Why Commercial Datasets Win Over Data Scraping for AI Training

Data is the quiet engine behind business decisions, analytics, automation, and AI. When it’s good, teams move faster, models perform better, and decisions feel less like educated guessing.

When it’s bad, everything downstream gets messy: dashboards mislead, machine learning models drift, and teams spend more time cleaning spreadsheets than solving actual problems.

We’ve already covered how to collect data for AI training. Today we’re tackling an equally important question: what kind of data should you trust?

For many businesses, the choice comes down to two routes: data scraping or buying data from a commercial provider. One promises flexibility and scale. The other offers structure, licensing clarity, and reliability. Both can work, but not for the same goals, timelines, or risk levels.

This piece compares buying datasets with web scraping for machine learning workflows, and explains why licensed, ready-to-use datasets are becoming the stronger long-term option for AI teams.

Discover licensed AI training datasets

TL;DR:

What is the difference between commercial datasets and data scraping?

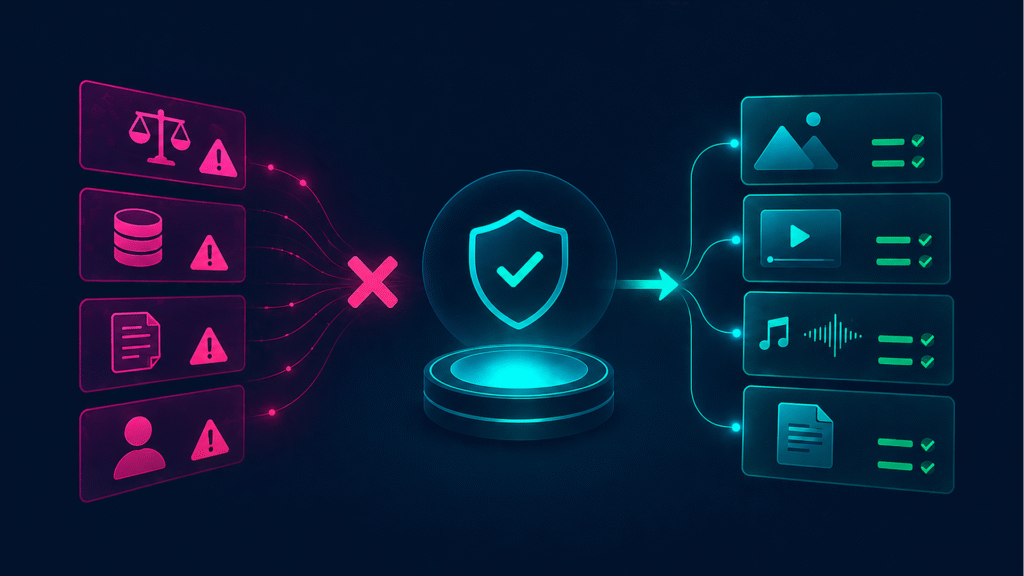

Commercial datasets are pre-collected, licensed, structured data sources designed for safe and scalable AI training. Data scraping is the automated extraction of raw data from websites, often requiring cleaning, labeling, and legal validation before it can be used in machine learning.

Why can data scraping be risky for AI training?

Scraped datasets may include unclear licensing, missing consent, inconsistent structure, or copyright-protected content. These issues can create legal, ethical, and operational risks in commercial AI systems.

What is data quality and why it matters

Data quality describes how well a dataset fits its intended purpose. For AI, analytics, and business intelligence, quality is not a “nice to have.” It determines whether your outputs are accurate, usable, and trustworthy.

For example, IBM defines data quality through criteria such as accuracy, completeness, validity, consistency, uniqueness, timeliness, and fitness for purpose. These dimensions help organizations evaluate whether data can reliably support governance, analytics, and operational decisions.

Why data quality is the first priority for businesses

Poor data creates expensive noise. A marketing team may target the wrong audience. A finance team may forecast from outdated records. An AI team may train a model on duplicated, biased, irrelevant, or legally vague content.

In web scraping projects for machine learning, this risk is easy to underestimate. Scraping can produce a huge amount of data quickly, but volume does not equal value. If the dataset lacks clean labels, consistent metadata, clear provenance, and usage rights, the work shifts from “training the model” to “rescuing the dataset.”

For AI training, data quality affects:

- model accuracy

- bias and representational balance

- output safety

- legal and commercial usability

- long-term maintenance costs

- confidence from stakeholders, customers, and legal teams.

Put simply, the dataset becomes part of the final AI product.

Key data quality dimensions or what to evaluate in a dataset

Before choosing between buying data and data scraping, evaluate quality across five core dimensions.

- Accuracy means the data correctly represents what it claims to show. For visual AI, this could mean correct object labels, image descriptions, model release data, location tags, or content categories.

- Completeness means the dataset includes the fields, formats, and coverage needed for your use case. A computer vision dataset, for example, may need images, video, metadata, labels, releases, and category-level annotations.

- Consistency means data follows a predictable structure. This matters when combining millions of files across image, video, audio, and text sources.

- Freshness means the data is current enough for your model or business need. This is especially important for trend detection, recommendation systems, and market intelligence.

- Uniqueness means the dataset avoids unnecessary duplication. Duplicates can distort model learning, inflate dataset size, and reduce training efficiency.
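Some of these dimensions can be measured before any training run. The following is a minimal sketch, not a standard method: the field names (`file_id`, `label`, `license`, `captured_on`, `content_hash`), the one-year freshness window, and the sample records are all assumptions made for illustration. Accuracy is left out on purpose, because it usually needs a human-reviewed reference set to check against.

```python
from datetime import date

# Hypothetical schema: adjust to whatever your dataset manifest actually contains.
REQUIRED_FIELDS = ("file_id", "label", "license", "captured_on")


def quality_report(records, today, max_age_days=365):
    """Return simple ratios (0.0 to 1.0) for three quality dimensions."""
    total = len(records)
    if total == 0:
        return {"completeness": 0.0, "uniqueness": 0.0, "freshness": 0.0}

    # Completeness: every required field is present and non-empty.
    complete = sum(
        all(r.get(field) not in (None, "") for field in REQUIRED_FIELDS)
        for r in records
    )
    # Uniqueness: distinct content hashes relative to the number of records.
    unique = len({r["content_hash"] for r in records if r.get("content_hash")})
    # Freshness: captured within the allowed age window.
    fresh = sum(
        1
        for r in records
        if r.get("captured_on") and (today - r["captured_on"]).days <= max_age_days
    )
    return {
        "completeness": complete / total,
        "uniqueness": unique / total,
        "freshness": fresh / total,
    }


# Illustrative records only, not real data.
sample = [
    {"file_id": "a1", "label": "cat", "license": "commercial",
     "captured_on": date(2025, 1, 10), "content_hash": "h1"},
    {"file_id": "a2", "label": "cat", "license": "commercial",
     "captured_on": date(2025, 1, 10), "content_hash": "h1"},  # duplicate content
    {"file_id": "a3", "label": "", "license": "commercial",
     "captured_on": date(2020, 5, 1), "content_hash": "h2"},   # no label, stale
    {"file_id": "a4", "label": "dog", "license": None,
     "captured_on": date(2025, 3, 1), "content_hash": "h3"},   # no license
]

report = quality_report(sample, today=date(2025, 6, 1))
print(report)  # {'completeness': 0.5, 'uniqueness': 0.75, 'freshness': 0.75}
```

A report like this does not replace a proper review, but it gives teams a quick way to compare a scraped collection and a purchased sample on the same terms.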

DepositPhotos provides multimodal AI training data built around your unique needs: high-quality image, video, audio data, as well as conversational, 3D, and text content data from a library of 310M+ licensed files, with off-the-shelf collections, custom datasets, and relevant annotations.

Need structured data for AI training? Explore DepositPhotos’ AI Training Data and request a custom dataset sample.

Buying vs scraping data: core differences between the two approaches

Data scraping means using automated tools to extract data from websites, platforms, databases, or public web pages. In machine learning workflows, web scraping is often used to collect large volumes of text, images, product data, reviews, metadata, or market signals.

So, what is AI scraping? In simple terms, it’s the use of scraping methods to collect data that may later support AI model training, fine-tuning, evaluation, analytics, or enrichment.

Buying data means acquiring datasets from a provider that has already done everything for you: collected, structured, licensed, annotated, and packaged the data for commercial use.

Here’s the practical difference:

| Factor | Buying data | Data scraping |
| --- | --- | --- |
| Speed | Faster to activate, ready-to-use | Slower after manual setup, cleaning, and QA |
| Control | Lower if off-the-shelf; higher with custom datasets | High collection control |
| Quality | High, structured, and validated | Varies heavily by source |
| Legal clarity | Strong when properly licensed | Risky, especially at scale |
| Cost | Higher upfront, lower risks | Lower upfront, higher hidden costs |
| Maintenance | Provider can support integration and updates | Internal team owns breakages and changes |
| Best for | AI training, enterprise workflows, compliance-sensitive use cases | Research, monitoring, niche public data collection |

If your question is not “which is cheaper?” but “which gives us reliable, usable data?”, then think about sourcing data for AI training from a reliable provider.

Buying data: pros, cons, and use cases

Buying data is often the better choice when a business needs speed, structure, and confidence. This is especially true for LLM datasets, computer vision datasets, speech datasets, and multimodal AI training workflows.

Commercial datasets can be easier to evaluate before use. They may include metadata, annotations, content categories, file specifications, and licensing terms.

DepositPhotos features 330M+ images, 20M+ videos, 3M+ music and SFX files, and 200K+ design templates, along with additional content and metadata available upon request.

The main benefits of getting your data from us:

- Ready-to-use structure. Instead of scraping, parsing, deduplicating, and tagging from scratch, teams can start with prepared collections.

- Commercial safety. Licensed datasets reduce uncertainty around ownership, consent, and usage rights for secure, commercially safe development and compliance with industry standards.

- Better metadata. Good metadata improves searchability, filtering, model context, and training relevance.

- Custom options. Buying data does not always mean taking a fixed package. We at DepositPhotos offer flexible AI datasets: off-the-shelf collections and custom datasets tailored to industries, topics, and specific AI use cases. For example: generative AI, computer vision, people recognition, and speech/audio processing.

The trade-offs are clear. Purchased data can cost more upfront, and off-the-shelf datasets may not fit every niche requirement. That’s where DepositPhotos comes in, with flexible custom datasets for building capable, productive AI models.

This is especially important for enterprise AI teams, where reduced legal, technical, and operational friction often justifies the investment.

Discover multimodal AI datasets

Scraping data

Scraping data can be useful when teams need highly specific, frequently changing, or publicly available information. For example, web scraping for alternative data can help monitor pricing, product listings, public reviews, job posts, or market signals.

Scraping can also help build experimental datasets for machine learning projects when no ready-made source exists.

The strengths:

- Flexibility. You can target specific websites, formats, fields, or update frequencies.

- Customization. Scraping can support unusual use cases that commercial datasets may not cover.

- Lower upfront cost. Small scraping projects can begin with limited tooling and resources.

But scraping has serious drawbacks.

To scrape data from websites and other sources responsibly and at scale, teams need substantial engineering resources, parsing logic, storage infrastructure, deduplication, monitoring, and compliance review.

Websites change. Pages break. Anti-bot measures appear. Data structures shift. What worked last month may fail tomorrow.
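Shifting data structures are often the first sign that a scraper has quietly broken. One way to catch this early is to validate each batch of scraped records against the fields you expect. The sketch below assumes a hypothetical product-listing schema (`title`, `price`, `url`); the field names and sample records are illustrative, not taken from any real site.

```python
# Hypothetical schema for scraped product listings: field name -> expected type.
EXPECTED_FIELDS = {"title": str, "price": float, "url": str}


def find_schema_breaks(records, expected=EXPECTED_FIELDS):
    """List (record index, field, problem) for every field that looks wrong."""
    problems = []
    for index, record in enumerate(records):
        for field, expected_type in expected.items():
            if field not in record:
                problems.append((index, field, "missing"))
            elif not isinstance(record[field], expected_type):
                problems.append((index, field, "wrong type"))
    return problems


def breakage_rate(records, expected=EXPECTED_FIELDS):
    """Share of records with at least one schema problem."""
    if not records:
        return 0.0
    broken = {index for index, _, _ in find_schema_breaks(records, expected)}
    return len(broken) / len(records)


# Illustrative batch: the second record has a price stored as text,
# the third has lost the price field entirely.
batch = [
    {"title": "A", "price": 9.99, "url": "https://example.com/a"},
    {"title": "B", "price": "9.99", "url": "https://example.com/b"},
    {"title": "C", "url": "https://example.com/c"},
]

print(find_schema_breaks(batch))
# [(1, 'price', 'wrong type'), (2, 'price', 'missing')]
```

A team might alert when `breakage_rate` crosses a threshold it has chosen for itself, which turns "what worked last month may fail tomorrow" into something that gets noticed the same day.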

There is also the consent and licensing question. AI training datasets scraped without consent have become a serious reputational and legal concern, especially for generative AI companies (learn more from NIST and OECD).

The EU has moved toward more transparency around training data: the European Commission’s 2025 template for general-purpose AI model providers is designed to summarize training content, list main data collections, and help copyright holders exercise their rights.

That does not mean all scraping is illegal or inappropriate. It means the risk profile is changing. Scraping without a strong legal review, documentation, and data governance process is not a serious long-term data acquisition strategy for commercial AI.

When to buy vs when to scrape data: choosing the right approach for your business

Buy ready-made data when:

- you need an LLM training dataset or multimodal dataset quickly

- legal clarity is important

- data will be used in a commercial product

- your team lacks time for collection, labeling, and cleaning

- you need metadata, annotations, and consistent structure

- the dataset must support audits, procurement, or enterprise compliance.

Scrape data when:

- the target data is public and legally safe to collect

- “right here, right now” matters more than deep annotation

- you need narrow, custom signals

- you have engineering and legal resources for validation

- the project is exploratory, internal, or research-focused.

For many AI teams, the answer is not purely one or the other. A mature data acquisition strategy may combine both tactics: licensed datasets for core model training and tightly scoped scraping for supplemental monitoring, enrichment, or market intelligence.

Legal, technical, and quality challenges in data collection

Every data collection method has friction. The difference is where that friction appears.

With scraping, teams face legal review, robots.txt policies, website terms, copyright questions, consent issues, privacy concerns, changing page structures, blocked requests, cleaning pipelines, and duplicate detection.
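Of the items above, robots.txt is the easiest to check programmatically. The sketch below uses Python’s standard-library `urllib.robotparser` on an inline, made-up robots.txt so it runs without network access; the bot name and URLs are placeholders. Keep in mind that passing a robots.txt check is only a baseline courtesy. It says nothing about website terms, copyright, consent, or privacy, which still need their own review.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt content for illustration. In practice you would load
# the real file from the target site before crawling it.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def build_parser(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def allowed_urls(parser, user_agent, urls):
    """Keep only the URLs this user agent is permitted to fetch."""
    return [url for url in urls if parser.can_fetch(user_agent, url)]


parser = build_parser(ROBOTS_TXT)
candidates = [
    "https://example.com/products/1",
    "https://example.com/private/report.html",
]

print(allowed_urls(parser, "example-bot", candidates))
# ['https://example.com/products/1']
```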

With buying data, teams must evaluate provider quality, licensing scope, dataset relevance, format compatibility, update options, and price. The work is still there, but it is more predictable.

The most common AI training-related problems are:

- Unclear provenance. Teams need to know where data came from and what rights apply.

- Weak annotations. Poor labels lead to poor model learning.

- Format inconsistency. Mixed file types and incomplete metadata slow integration.

- Unnecessary duplication. Repeated assets can distort training results.

- Compliance exposure. Data that looks usable may not actually be safe for commercial deployment.
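Several of the problems listed above can be screened for automatically before data reaches a training pipeline. Here is a minimal sketch under stated assumptions: each asset is a dictionary with hypothetical `id`, `content`, `source`, `license`, and `label` fields, and `commercial-ai-training` is an invented license tag standing in for whatever your legal team has cleared. Duplicate detection here is exact-match only (SHA-256 of the raw bytes), so near-duplicates would need a different technique.

```python
import hashlib

# Invented license tag for illustration; use the values your legal team approves.
ALLOWED_LICENSES = {"commercial-ai-training"}


def screen_assets(assets, allowed_licenses=ALLOWED_LICENSES):
    """Split assets into accepted IDs and rejected (ID, reasons) pairs."""
    accepted, rejected = [], []
    seen_hashes = set()
    for asset in assets:
        reasons = []
        if not asset.get("source"):
            reasons.append("unclear provenance")
        if asset.get("license") not in allowed_licenses:
            reasons.append("license not cleared")
        if not asset.get("label"):
            reasons.append("missing annotation")
        # Exact-duplicate check against assets that were already accepted.
        digest = hashlib.sha256(asset["content"]).hexdigest()
        if digest in seen_hashes:
            reasons.append("duplicate content")
        if reasons:
            rejected.append((asset["id"], reasons))
        else:
            seen_hashes.add(digest)
            accepted.append(asset["id"])
    return accepted, rejected


# Illustrative assets only; the byte strings stand in for real file contents.
assets = [
    {"id": "img-1", "content": b"aaa", "source": "provider-x",
     "license": "commercial-ai-training", "label": "cat"},
    {"id": "img-2", "content": b"aaa", "source": "provider-x",
     "license": "commercial-ai-training", "label": "cat"},
    {"id": "img-3", "content": b"bbb", "source": "",
     "license": "unknown", "label": "dog"},
    {"id": "img-4", "content": b"ccc", "source": "provider-x",
     "license": "commercial-ai-training", "label": ""},
]

accepted, rejected = screen_assets(assets)
print(accepted)  # ['img-1']
for asset_id, reasons in rejected:
    print(asset_id, reasons)
```

Hashes are recorded only for accepted assets, so a properly licensed copy is not thrown out just because an unlicensed copy of the same file appeared earlier in the list.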

DepositPhotos addresses several of these issues through licensed multimodal data, high-quality annotations, custom dataset support, and end-to-end assistance for successful integration into AI projects.

Build your AI with licensed content

The future of data strategy or why businesses are shifting toward licensed datasets

AI data strategy is rapidly moving from “collect everything” to “collect what you can trust.” The shift is practical and ethical.

As AI moves deeper into commercial products, businesses need datasets that can survive legal review, procurement checks, customer questions, and model audits.

Scraped data may still play a role, but undocumented content scraping is becoming harder to justify for production-grade AI.

Regulation is also pushing the market toward transparency. New frameworks require general-purpose AI model providers to publish summaries of training content and follow copyright-related obligations, creating more pressure for documented data sources.

For businesses, this changes the buying decision. Data is no longer just raw material. It is part of governance, brand safety, and product credibility.

Commercial datasets are better positioned for this environment because they offer structure, rights clarity, metadata, and support. Scraping may deliver volume. Licensed datasets deliver confidence.

Final thoughts

So, is it scraping or buying data?

While both have their benefits and drawbacks, the best data acquisition strategy to build reliable AI systems starts with one question: Will this data still be usable, defensible, and valuable six months from now?

For modern AI teams, the answer increasingly points toward licensed, structured, metadata-rich datasets.

Get a free AI training data sample from DepositPhotos and discuss the best dataset solution for your project.

Contact us

FAQ

Is data scraping good for AI training?

Data scraping can be useful for collecting raw data, but it requires significant cleaning, labeling, and legal validation before it can be used for AI training.

Why are commercial datasets better than scraping?

Commercial datasets are structured, licensed, and ready for machine learning, reducing legal risk and preparation time.

Can scraped data be used for machine learning?

Yes, but it must be cleaned, labeled, and legally validated before being used in production AI systems.

What is the safest way to get AI training data?

Using licensed commercial datasets is the safest and most scalable option for most enterprise AI applications.